Hi, I'm Aidul

Software Developer

I am looking for an environment where I can apply my conceptual, technical, and analytical skills, and that offers professional growth while I stay resourceful, innovative, and dynamic.

Contact Me

About Me

My Introduction

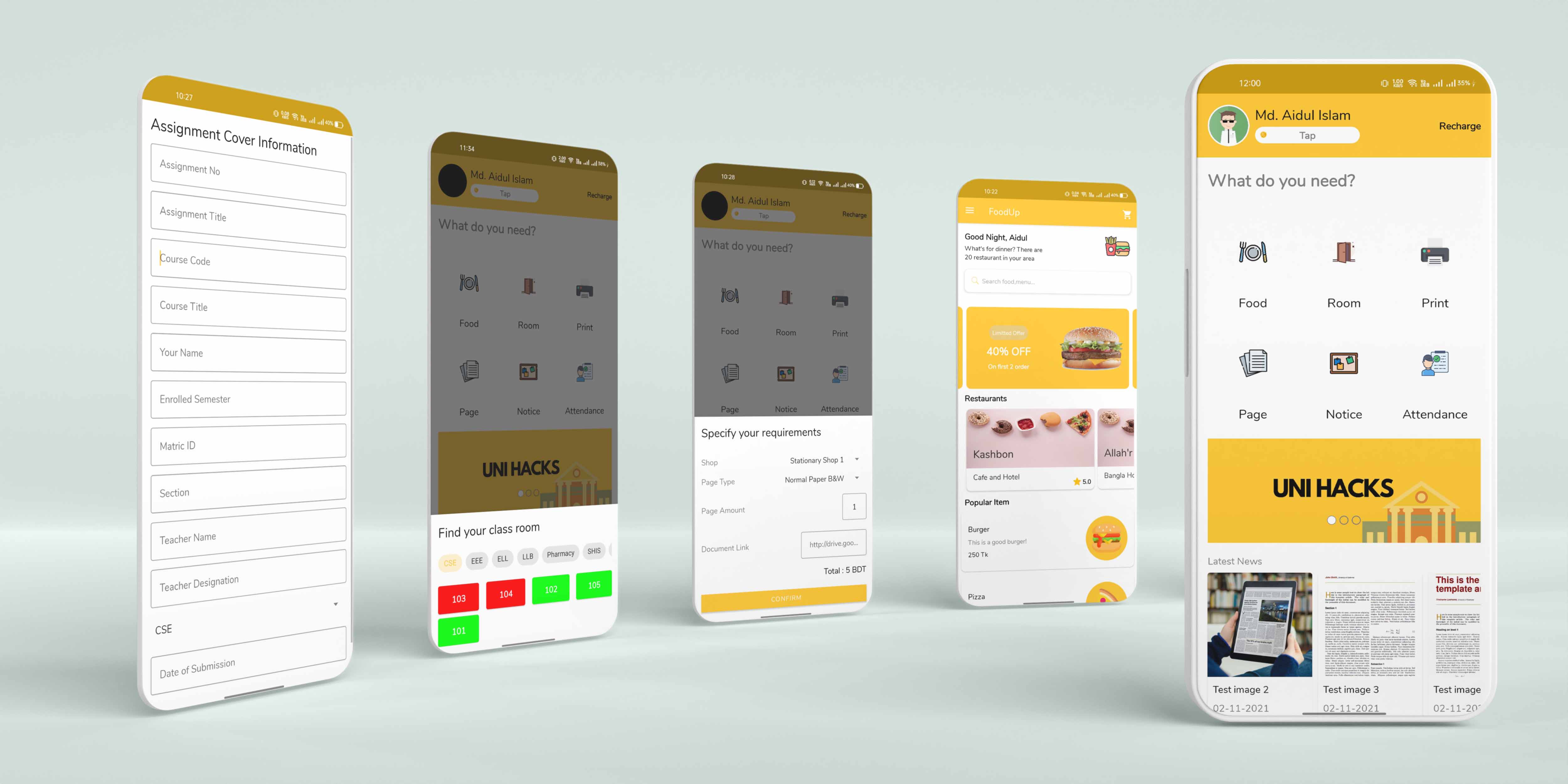

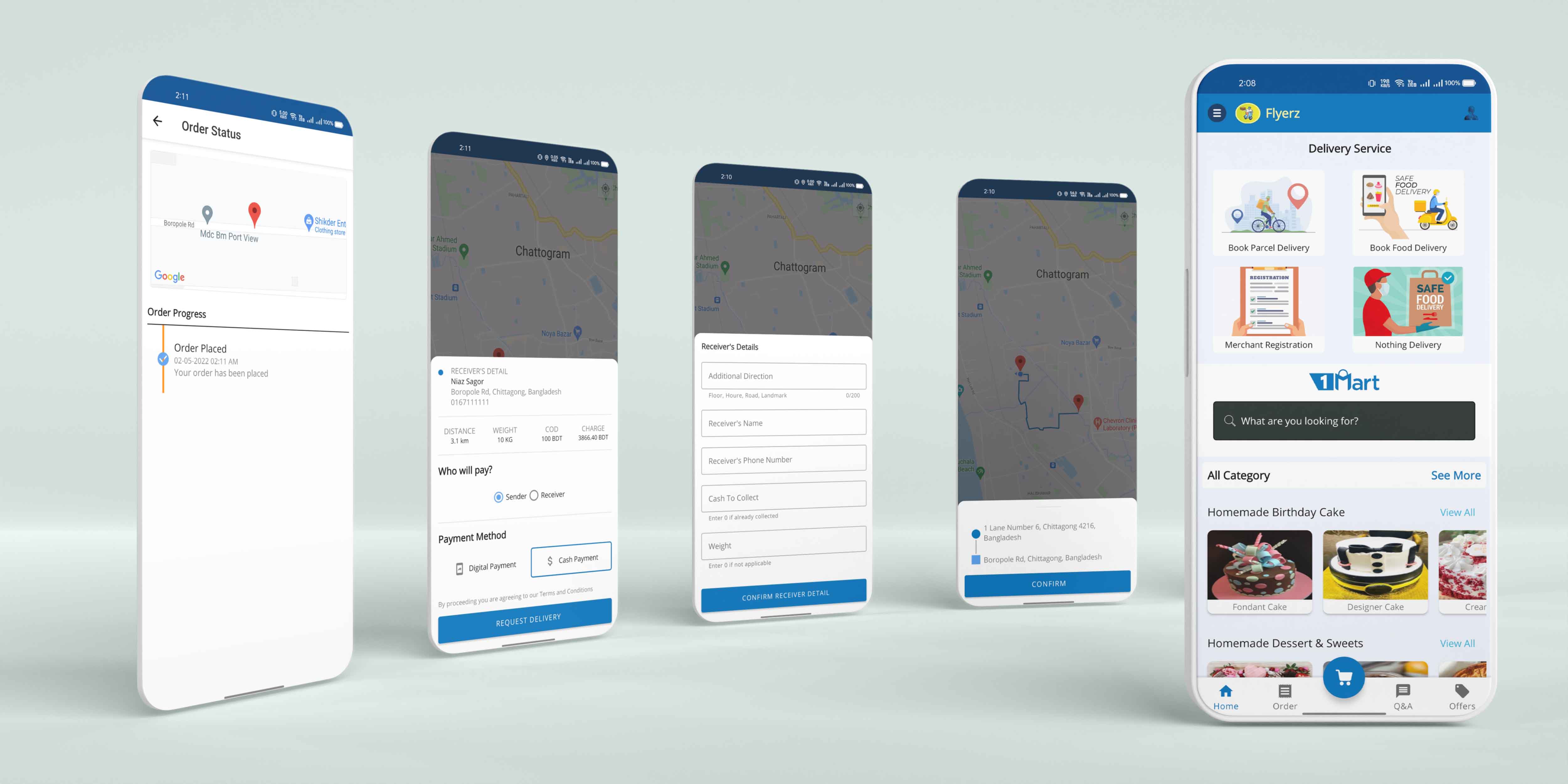

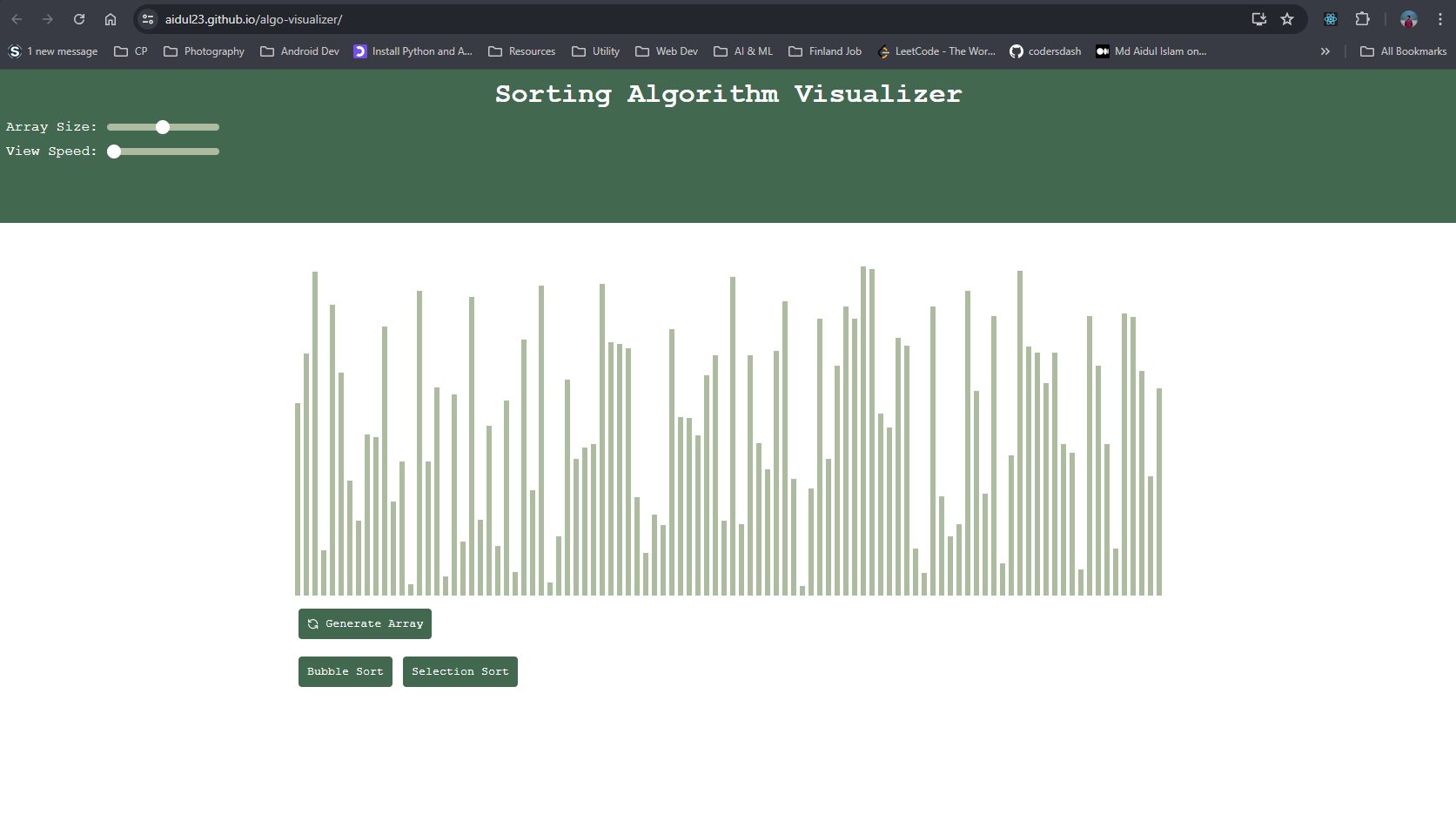

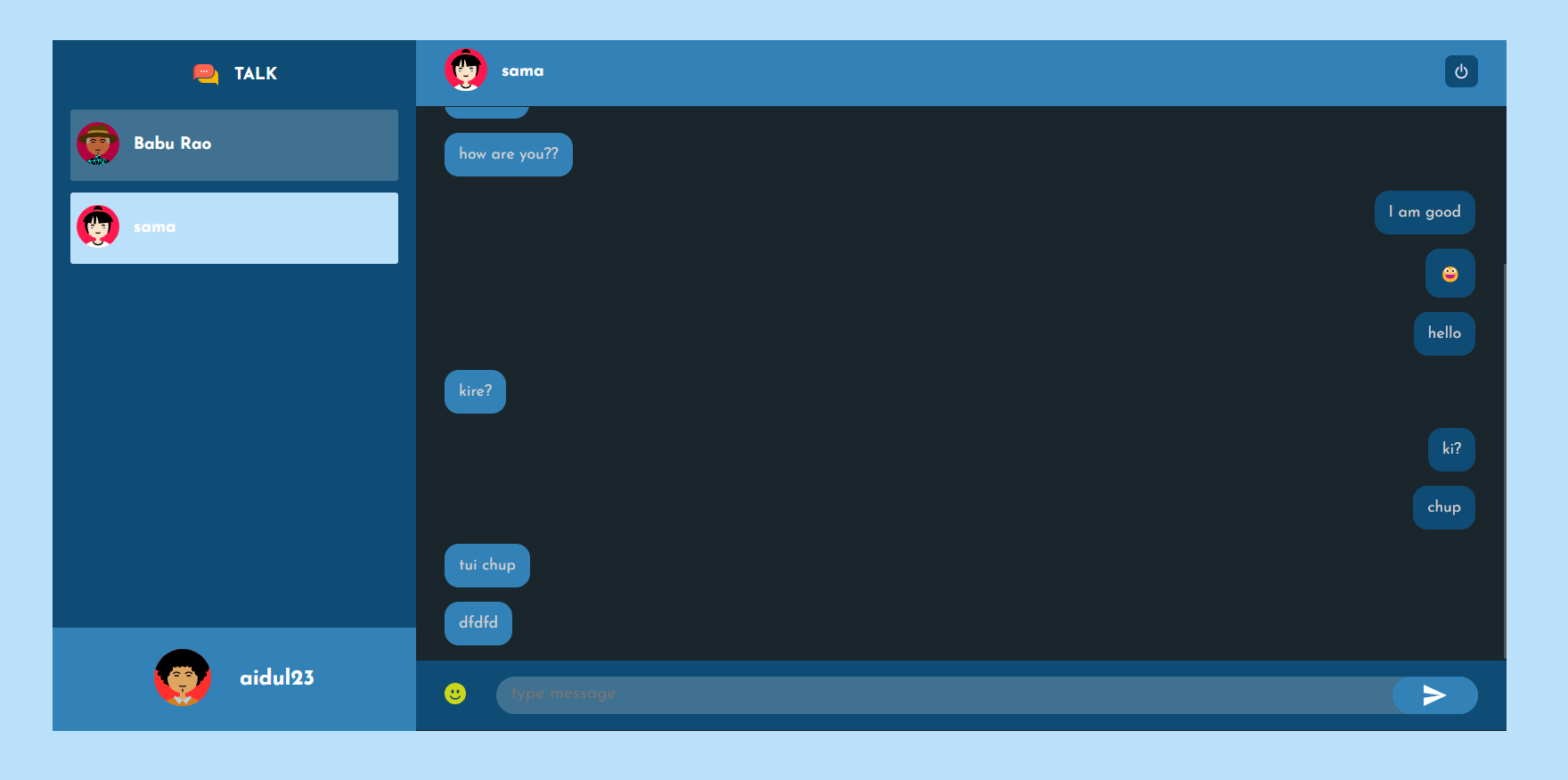

My name is Md. Aidul Islam. I earned my Bachelor of Science (Engg.) degree in CSE from IIUC, Bangladesh, and I am currently pursuing a master's degree in Computing Science and Software at Tampere University, Finland. I enjoy solving problems with many kinds of algorithms. I also like working in a team, and I have developed many projects together with team members. I build Android and web applications.

experience

project

worked in

Skills

My Technical Level

Languages

Programming/Markup Languages

Python: 80%

C++: 70%

Java: 80%

JavaScript: 80%

TypeScript: 60%

Kotlin: 50%

HTML5: 80%

CSS3: 80%

Operating System

Both PC & mobile

Database

Database Management

Frameworks

Both Frontend and Backend

IDEs

Development Tools

VCS

Version Control System

Career

My Career Journey

M.Sc in Software, Web & Cloud, Computing Sciences

Tampere University

B.Sc in Computer Science & Engineering

International Islamic University Chittagong

Higher Secondary School Certificate (Science)

Government City College Chittagong

Secondary School Certificate (Science)

Government Muslim High School Chittagong

Research Assistant

GPT-Lab, Tampere University

Software Engineer (Part-Time)

nztrip

Android Developer

Xit Bangladesh

Android Developer Intern

BroTech IT

Certification

Awards, Achievements and Certifications

Projects

Most Recent Work